Connect4 Zero

AlphaZero from Scratch

A from-scratch implementation of DeepMind's AlphaZero algorithm for Connect 4, combining self-play reinforcement learning, Monte Carlo Tree Search, and neural network training.

Overview

The system applies AlphaZero to Connect 4 and learns to play at a superhuman level through pure self-play, using no human game knowledge beyond the rules.

Challenge

Most AI game agents rely on handcrafted evaluation functions or pre-existing game databases. AlphaZero demonstrated that a single algorithm could master multiple games from scratch. I wanted to understand it deeply by implementing it myself rather than relying on existing libraries.

Approach

Implemented Monte Carlo Tree Search (MCTS) with UCB-based exploration. Each node tracks visit counts and value estimates, and the tree grows as each simulation descends to a leaf, expands it, and backs its evaluation up the path.
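The selection, expansion, and backup loop can be sketched in Python. This is a minimal sketch, not the project's code: it uses AlphaZero's PUCT rule (a UCB variant whose exploration bonus is scaled by the network's prior) rather than plain UCB1, stores each node's value from the perspective of the player who moved into it, and plugs in a hypothetical `uniform_evaluator` where the trained network would go. All names (`Node`, `simulate`, `search`, `c_puct=1.5`) are illustrative assumptions.

```python
import math

ROWS, COLS = 6, 7  # standard Connect 4 board; players are 1 and -1, empty is 0

def legal_moves(board):
    # A column is playable while its top cell (row 0) is empty.
    return [c for c in range(COLS) if board[0][c] == 0]

def play(board, col, player):
    # Return a copy of the board with `player`'s disc dropped into `col`.
    new = [row[:] for row in board]
    for r in range(ROWS - 1, -1, -1):
        if new[r][col] == 0:
            new[r][col] = player
            return new
    raise ValueError("column is full")

def is_win(board, player):
    # Look for four in a row horizontally, vertically, or on either diagonal.
    for r in range(ROWS):
        for c in range(COLS):
            for dr, dc in ((0, 1), (1, 0), (1, 1), (1, -1)):
                if all(0 <= r + i * dr < ROWS and 0 <= c + i * dc < COLS
                       and board[r + i * dr][c + i * dc] == player
                       for i in range(4)):
                    return True
    return False

class Node:
    def __init__(self, prior):
        self.prior = prior    # P(s, a): policy prior for the move into this node
        self.visits = 0       # N(s, a)
        self.value_sum = 0.0  # W(s, a), seen by the player who moved into this node
        self.children = {}    # move -> Node

    def q(self):
        return self.value_sum / self.visits if self.visits else 0.0

def puct_score(parent, child, c_puct=1.5):
    # Q(s, a) + c_puct * P(s, a) * sqrt(N(s)) / (1 + N(s, a))
    return child.q() + c_puct * child.prior * math.sqrt(parent.visits) / (1 + child.visits)

def uniform_evaluator(board, player):
    # Hypothetical stand-in for the network: uniform priors, neutral value.
    moves = legal_moves(board)
    return {m: 1.0 / len(moves) for m in moves}, 0.0

def simulate(node, board, player, evaluate):
    # One simulation; returns the value of `board` for `player`, the side to move.
    if is_win(board, -player):
        value = -1.0  # the previous move already won the game
    elif not legal_moves(board):
        value = 0.0   # full board: draw
    elif not node.children:
        # Leaf: expand it and use the evaluator's value instead of a random rollout.
        priors, value = evaluate(board, player)
        for move, p in priors.items():
            node.children[move] = Node(p)
    else:
        move, child = max(node.children.items(),
                          key=lambda mc: puct_score(node, mc[1]))
        # The child's result is from the opponent's point of view, so negate it.
        value = -simulate(child, play(board, move, player), -player, evaluate)
    node.visits += 1
    node.value_sum -= value  # stored for the player who moved into this node
    return value

def search(board, player, num_simulations=200, evaluate=uniform_evaluator):
    # Returns root visit counts N(s, a), which act as the search-improved policy.
    root = Node(prior=1.0)
    for _ in range(num_simulations):
        simulate(root, board, player, evaluate)
    return {m: child.visits for m, child in root.children.items()}
```

Even with the uninformative evaluator, running `search` from a position where one column wins immediately concentrates most visits on that column, because terminal wins are scored exactly and backed up the tree.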

Built a convolutional neural network that takes the board state as input and outputs both a policy (a probability for each of the seven columns) and a value (an estimate of the game outcome for the player to move). Trained on Apple Silicon with MPS acceleration.
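The network itself is not reproduced here, but the plumbing around its inputs and outputs can be sketched. This assumes a common AlphaZero-style setup that the project may or may not share: two binary 6x7 input planes (side to move first), seven policy logits (one per column) with full columns masked out before the softmax, and a tanh value head giving an expected outcome in [-1, 1]. The function names are hypothetical.

```python
import math

ROWS, COLS = 6, 7  # board cells hold 1, -1, or 0 (empty)

def encode(board, player):
    # Two 6x7 binary planes: the side to move's discs, then the opponent's.
    own = [[1.0 if cell == player else 0.0 for cell in row] for row in board]
    opp = [[1.0 if cell == -player else 0.0 for cell in row] for row in board]
    return [own, opp]

def policy_from_logits(logits, board):
    # Softmax over the 7 column logits, giving full columns probability 0.
    legal = [c for c in range(COLS) if board[0][c] == 0]
    m = max(logits[c] for c in legal)  # subtract the max for numerical stability
    exps = {c: math.exp(logits[c] - m) for c in legal}
    total = sum(exps.values())
    return {c: exps.get(c, 0.0) / total for c in range(COLS)}

def value_from_raw(x):
    # tanh squashes the value head's raw output into [-1, 1] (loss to win).
    return math.tanh(x)
```

Encoding from the side to move's perspective lets a single network serve both players, since the position always looks like "my discs versus yours".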

Created a self-play pipeline: the current best model plays against itself to generate training data, the network is trained on those games, and the cycle repeats.
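Turning finished self-play games into training examples can be sketched as follows, using the recipe described in the AlphaZero paper rather than anything confirmed about this project: the policy target is the root's MCTS visit distribution (sharpened or flattened by a temperature T), the value target z is the final result from the perspective of the player to move at each position, and the loss adds the squared value error to the policy cross-entropy. The function names are hypothetical, and the paper's L2 term is assumed to be handled by the optimizer's weight decay.

```python
import math
import random

def visits_to_policy(visits, temperature=1.0):
    # pi(a) proportional to N(a)^(1/T); T = 0 puts all mass on the most-visited move.
    if temperature == 0:
        best = max(visits, key=visits.get)
        return {m: (1.0 if m == best else 0.0) for m in visits}
    weights = {m: n ** (1.0 / temperature) for m, n in visits.items()}
    total = sum(weights.values())
    return {m: w / total for m, w in weights.items()}

def sample_move(pi, rng=random):
    # Sample a move in proportion to pi (adds variety to self-play openings).
    moves = list(pi)
    return rng.choices(moves, weights=[pi[m] for m in moves])[0]

def label_game(history, winner):
    # history: (state, player_to_move, pi) per position; winner is 1, -1, or 0 (draw).
    # z is the final result seen from the player to move at that position.
    examples = []
    for state, player, pi in history:
        z = 0.0 if winner == 0 else (1.0 if player == winner else -1.0)
        examples.append((state, pi, z))
    return examples

def alphazero_loss(z, v, pi, p):
    # (z - v)^2 - sum_a pi(a) * log p(a); weight decay is left to the optimizer.
    cross_entropy = -sum(pi[a] * math.log(p[a]) for a in pi if pi[a] > 0)
    return (z - v) ** 2 + cross_entropy
```

Training the policy head toward visit counts, rather than toward the moves actually played, is what lets search act as a policy-improvement operator: the network learns to predict what a deeper search would have preferred.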

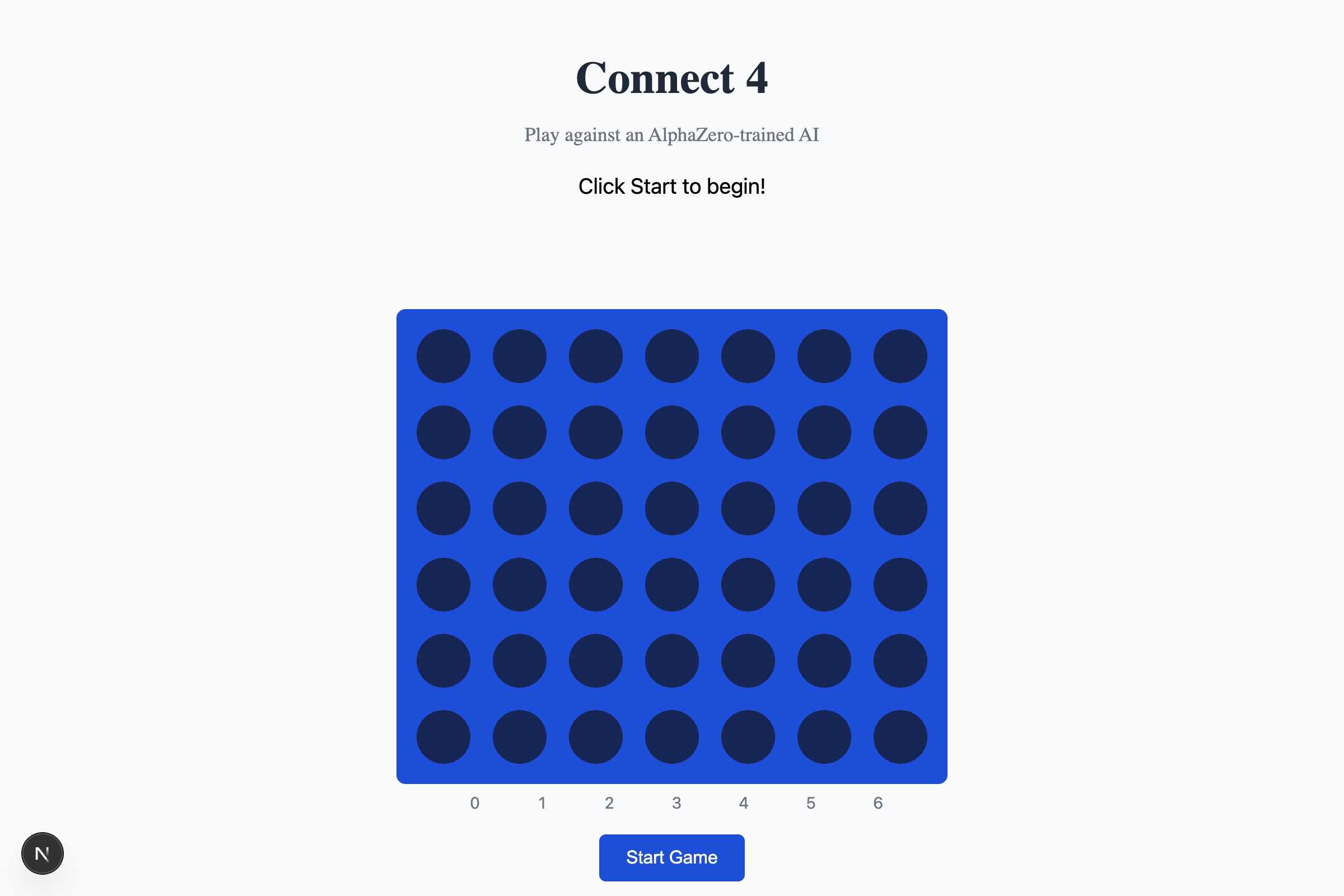

Added ONNX export for web deployment. The trained model runs entirely in-browser with no server round-trips.

Outcome

After training, the agent plays at a superhuman level, benchmarked against traditional minimax engines. The web version lets anyone play against the trained model directly in their browser. The project solidified my understanding of RL fundamentals: policy improvement through search, value estimation, and the explore-exploit tradeoff.